## A Year Passed By

Exactly one year ago, I published my first blog post, sharing the setup I was using as I got into local LLMs and generative AI tools. At the time I called it my baseline and promised to share how things changed. A fair bit has happened since then, so let’s see where things stand.

## The Hardware

My PC is exactly the same as it was a year ago - I haven’t changed a single component. I bought it in April 2025, expecting that the end of Windows 10 support in October would force people with older hardware to upgrade and push component prices up. I wanted to get ahead of the rush.

Turns out I was right that prices would go up, but I was wrong about why.

Out of curiosity, I went back to CyberPowerSystem and tried to spec out the same build. The GPU isn’t an exact match, as they now only offer the ASUS ROG Astral RTX 5090, which costs roughly £300 more than my TUF Gaming variant. Even accounting for that, the same PC would cost around 30-35% more than I paid twelve months ago. My salary is not keeping up with that level of change :)

## RAMmageddon

The tech press has been calling it “RAMmageddon” and “RAMpocalypse”, and the names are deserved. A 32GB DDR5-6000 kit that cost around $90 in early 2025 now goes for roughly $500, more than a fivefold increase. In the UK, prices rose about 3.5x between September 2025 and January 2026 alone[1], and some DDR5 kits saw increases of nearly 400%[2].
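Multipliers and percentage increases are easy to mix up, so here’s a quick Python check of the DDR5 figures quoted in this section and its footnotes. It’s plain arithmetic on those numbers, nothing more; `price_change` is just a helper name I made up for the example:

```python
def price_change(old: float, new: float) -> tuple[float, float]:
    """Return (multiplier, percentage increase) for a price moving from old to new."""
    if old <= 0:
        raise ValueError("old price must be positive")
    multiplier = new / old
    # A 1x multiplier means no change, so the increase is (multiplier - 1) * 100%.
    percent_increase = (multiplier - 1) * 100
    return multiplier, percent_increase


# USD prices for 32GB DDR5 kits, as quoted in this post and its footnotes.
examples = {
    "DDR5-6000 32GB, early 2025 -> now": (90, 500),
    "DDR5-5600 CL36 32GB, Sep 2025 -> Jan 2026": (111, 554),
}

for label, (old, new) in examples.items():
    multiplier, pct = price_change(old, new)
    print(f"{label}: x{multiplier:.2f} ({pct:+.0f}%)")
```

The takeaway: a “+400%” rise is roughly a 5x multiplier, not 4x, and the UK’s x3.55 works out to about +255%.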

Most of this comes down to AI infrastructure demand. OpenAI’s Stargate Project alone would reportedly consume up to 40% of global DRAM output[3], and the big cloud providers - Google, Amazon, Microsoft, Meta - placed open-ended orders, essentially hoovering up supply at any price. On top of that, memory makers shifted production capacity toward HBM (the memory used in AI accelerators) and away from consumer memory. Add US-China trade restrictions, tariffs, and Micron exiting its consumer “Crucial” brand entirely, and you’ve got a perfect storm.

So I was right to buy when I did, even if it was for completely the wrong reasons.

## Ollama

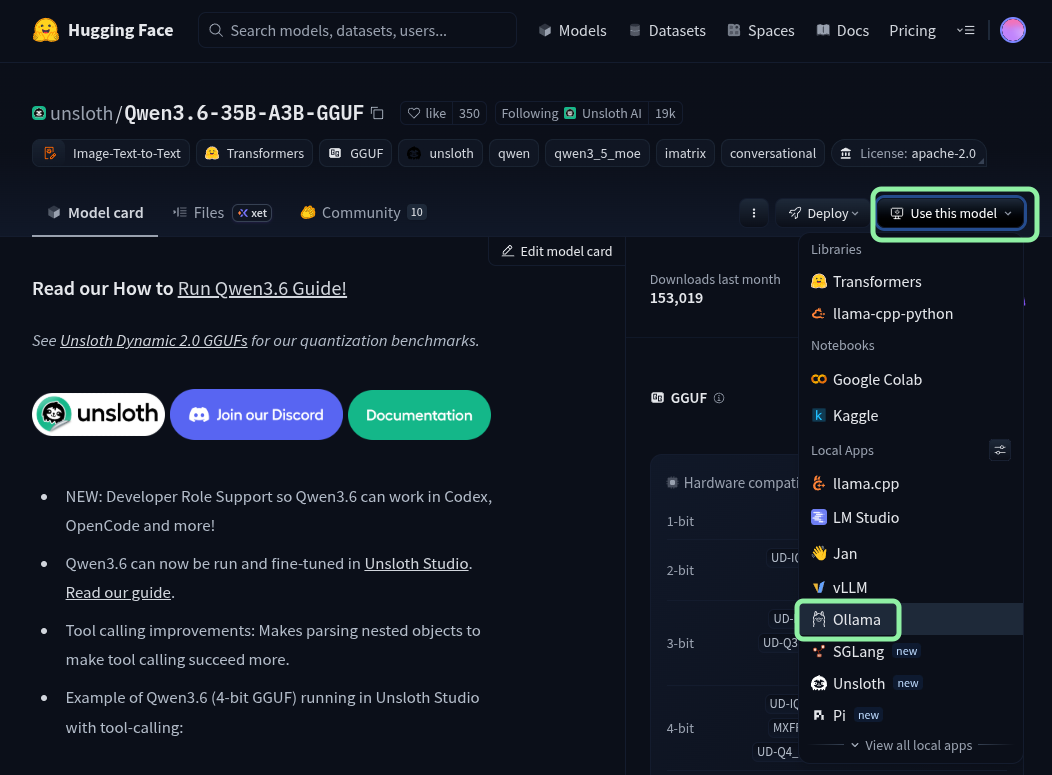

I still use Ollama regularly, though as a user I haven’t noticed much change beyond more models being available. My biggest discovery this year was that I could pull models directly from Hugging Face, not just the ones listed on Ollama’s website. Not every model works properly, but for those that do, it makes model variants much easier to experiment with. Unsloth has been particularly useful here: they provide optimised, quantised versions of popular models that run well on consumer hardware, and most of the GGUF models I pull from Hugging Face are their work (for example, Qwen3.6-35B-A3B-GGUF).
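If you want to try the same thing, Ollama accepts Hugging Face repositories as model names of the form `hf.co/{username}/{repository}`, optionally followed by a `:{quantisation}` tag, so on the command line it’s simply `ollama pull hf.co/unsloth/Qwen3.6-35B-A3B-GGUF`. Below is a minimal Python sketch doing the same through Ollama’s local REST API (`POST /api/pull`), assuming the server is running on its default `http://localhost:11434`. The helper names `hf_model_ref` and `pull_model` are hypothetical, purely for illustration, and which quantisation tags exist depends on what each repository actually publishes:

```python
import json
import urllib.request
from typing import Optional

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def hf_model_ref(user: str, repo: str, quant: Optional[str] = None) -> str:
    """Build an Ollama model name for a GGUF repo hosted on Hugging Face."""
    ref = f"hf.co/{user}/{repo}"
    # The quantisation tag is optional; without it, Ollama picks a default.
    return f"{ref}:{quant}" if quant else ref


def pull_model(model: str, base_url: str = OLLAMA_URL) -> dict:
    """Ask a running Ollama server to download a model and wait for completion."""
    payload = json.dumps({"model": model, "stream": False}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/api/pull",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # With streaming disabled, the server replies once, after the pull finishes.
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

With Ollama running, `pull_model(hf_model_ref("unsloth", "Qwen3.6-35B-A3B-GGUF"))` should block until the download finishes and return a small status object.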

Model-wise, I’ve mostly been running Qwen3-Coder, which has been excellent for coding tasks. I also tried Qwen3.5, but it wasn’t stable enough to rely on in my workflow. Qwen3.6 dropped this week, so I’ll be testing it over the coming days.

## Open WebUI

When I wrote the baseline post, I expected Open WebUI to become my main interface for interacting with local models. In reality, I quickly realised I wasn’t using it nearly as much as I thought I would. Most of my interaction with LLMs turned out to be coding-related, and doing that inside a chat window with copy-paste just doesn’t cut it. I’ve moved almost entirely to editor-based tools (more on that below), and while I still fire up Open WebUI occasionally for quick tests, it never became part of my daily workflow.

## VS Code → Claude Code → Kiro

In my baseline post, I was using VS Code with Continue, but as I mentioned in my Claude Code post, Continue never quite clicked for me - the workflow felt off, and the local models at the time weren’t impressive enough to make it worthwhile.

What changed things was Claude Code paired with Qwen3-Coder running locally via Ollama. Having an agentic coding assistant that could actually understand context, edit files, and run commands while using a local model was a proper step change. I wrote about that experience in detail here.

More recently, I’ve started using Kiro, which is what I’m using right now to help me write this very post. Kiro is developed by AWS and has access to the latest Anthropic models, so the jump in performance compared to local models is significant. I’ll cover Kiro properly in an upcoming post, as there’s quite a lot to say about it.

## Obsidian

I’m still a heavy Obsidian user, and the self-hosted live sync plugin remains essential. Having my notes available across all my devices without relying on a third-party service, with full offline support, is something I won’t give up.

What I did drop is the Obsidian Copilot plugin. It turns out I don’t have many use cases where I need AI assistance inside my note-taking app - when I do need an LLM, I’m already in my editor or terminal, so keeping things simple has worked out better.

## ComfyUI

ComfyUI is still my go-to for image generation, and the workflow-based approach remains unmatched for flexibility. I’ve tried a few models since moving away from Flux, including Stable Diffusion XL, but Qwen Image has been the best fit so far.

## The Blog Itself

The blog has changed a bit since the baseline post:

- Text-to-speech: Every post now has audio narration in two voices, powered by Kokoro. That little audio player at the top didn’t exist a year ago.

- Microblog / Thoughts: I added a thoughts section for short-form posts, the kind of thing you’d normally throw on social media. I automated the pipeline using Postiz and n8n, which I wrote about here.

- AI-assisted writing: This very post was drafted with AI assistance directly in my code editor, rather than by copying text back and forth into ChatGPT like I used to.

## To Wrap Up

Looking back, the core tools are largely the same, but the way I use them has fundamentally changed. AI tools have become part of my everyday life, both at home and at work (more on that in a future post). How I write posts, develop my blog, and approach coding looks very different from a year ago.

1. DropReference - DDR5 RAM Shortage: Complete review of price explosion in 2025 and outlook for 2026. Analysis of 1,100+ DDR5 SKUs across 8 countries. UK prices multiplied by x3.55 from September 2025 baseline.
2. Same DropReference analysis. DDR5-5600 CL36 32GB kits increased from $111 to $554 (+399%) between September 2025 and January 2026.
3. Wikipedia - 2024-present global memory supply shortage, citing Reuters and TrendForce.